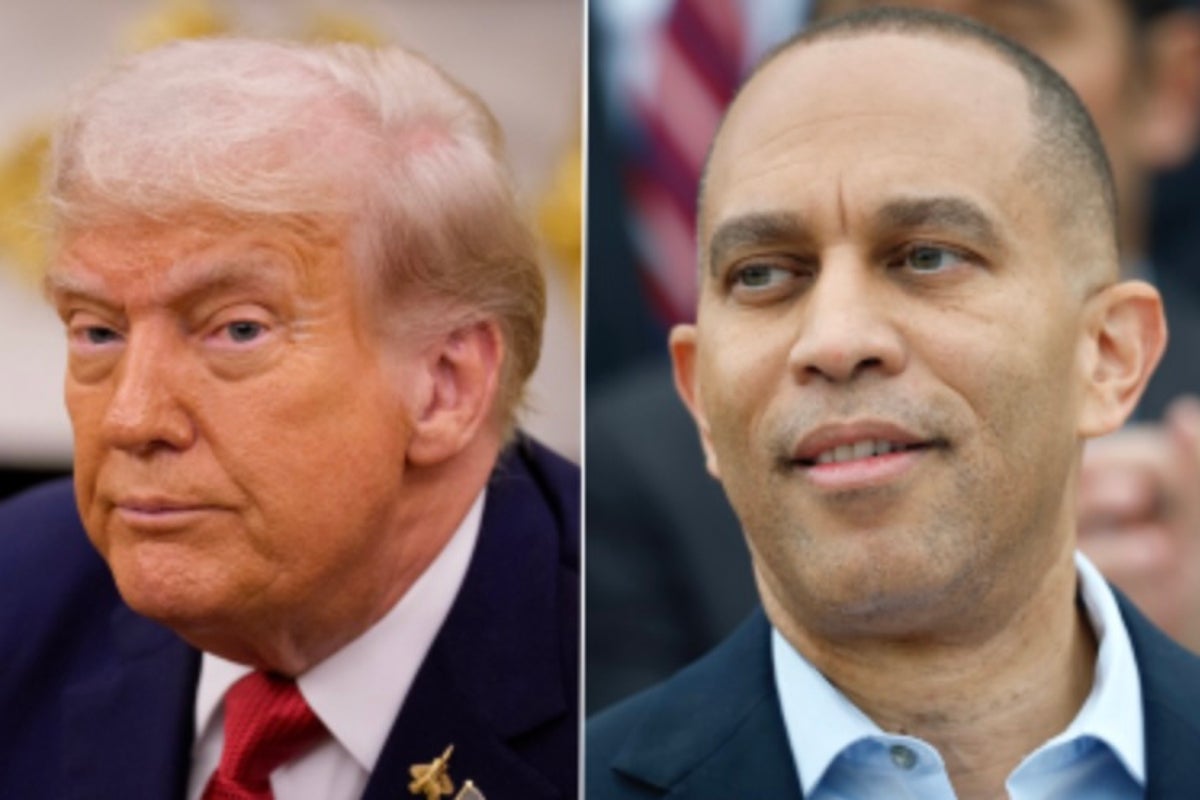

President Trump posted an AI-generated deepfake mocking House Minority Leader Hakeem Jeffries hours before a government funding deadline, raising concerns about synthetic media, platform moderation, deepfake detection, and AI regulation in 2025.

President Trump posted an AI-generated deepfake that altered footage of House Minority Leader Hakeem Jeffries, adding a sombrero and a handlebar mustache and fabricating remarks, just hours before a looming government funding deadline. The post drew swift criticism from Democrats, who called it racist and misleading. It also sparked confrontations on the House floor and renewed scrutiny of the political and reputational risks of synthetic media and AI misinformation.

Deepfakes are synthetic audio or video created or manipulated by machine learning to make people appear to say or do things they did not. Tools for creating convincing deepfake clips and AI-generated audio are more accessible than ever, lowering the barrier for political and bad-faith actors to spread manipulated content. In high-stakes moments such as budget negotiations or election season, manipulated media can inflame tensions, mislead voters, and complicate fact-based debate. Platforms and lawmakers face growing pressure to set clear rules that balance free expression with the need to curb misinformation.

How it works: Modern deepfake technology uses machine learning models to map facial features and audio patterns, then synthesizes new frames or speech that closely match the target. Some edits are complex frame-by-frame replacements, while others use overlays or generative editing to produce shareable clips.

Why it spreads: Short videos are easy to share and emotionally salient. When they target public figures, they can rapidly reach both partisan audiences and mainstream outlets, whether intended as satire or designed to deceive. Search interest in phrases such as "how to detect AI deepfakes in 2025" and "deepfake detection" has grown alongside concerns about digital provenance and AI regulation.

Political risk and reputational harm: Even superficial edits can be framed to demean or mock, leading to accusations of racism and escalating partisan conflict. Reputational damage can be immediate and amplified by rapid reshares.

Platform moderation dilemma: Social platforms must decide whether to remove, label, or allow manipulated political content. Enforcement is complicated by context, such as satire versus deception, the timing of the post, and the scale of similar posts by the same account. Questions about how platforms should moderate synthetic media, and how AI-powered moderation can be balanced against freedom of speech, are now central to policymaker discussions.

Legal and regulatory pressure: Incidents like this increase pressure on lawmakers to set obligations for platforms and to consider rules for synthetic media used in political communications. Discussions now include disclosure requirements for AI-generated content, expedited takedown procedures during critical events, and clearer liability frameworks for platform operators.

Operational responses for institutions: Political offices, campaigns, and newsrooms should invest in rapid verification workflows, media provenance tools, and clear communication strategies to counter manipulated content in real time. Training staff to spot common manipulation techniques and deploying deepfake detection tools and digital provenance solutions can reduce response time and contain misinformation.
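One small piece of a verification workflow can be sketched in code. The example below is a minimal illustration, not a description of any specific provenance product: it assumes an institution keeps a registry of SHA-256 fingerprints for footage it knows to be authentic. The function names and the registry layout are hypothetical. Exact hashing only recognizes byte-identical copies, so a re-encoded or edited clip will not match and should go to human review.

```python
import hashlib
from typing import Dict


def sha256_of_bytes(data: bytes) -> str:
    """Return the hex SHA-256 fingerprint of a media file's raw bytes."""
    return hashlib.sha256(data).hexdigest()


def check_provenance(clip: bytes, known_originals: Dict[str, str]) -> str:
    """Compare a clip against a registry of known-authentic fingerprints.

    known_originals maps a SHA-256 hex digest to a source description.
    This registry format is an assumption made for illustration.
    """
    digest = sha256_of_bytes(clip)
    source = known_originals.get(digest)
    if source is not None:
        return f"match: {source}"
    # No match does not prove manipulation; it only means the file is not
    # a byte-identical copy of anything in the registry.
    return "no match: escalate to manual review"


if __name__ == "__main__":
    original = b"official press conference footage"
    registry = {sha256_of_bytes(original): "official feed"}
    print(check_provenance(original, registry))
    print(check_provenance(original + b" (edited)", registry))
```

A check like this is useful mainly for confirming that a circulating file is an untouched original. Detecting that a clip was altered still depends on detection tools and trained reviewers.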

Coverage and expert commentary emphasize the convergence of technology and politics. Synthetic media is no longer a niche technical problem but a mainstream communications risk. This aligns with SEO and digital trends for 2025 that reward topical authority, transparency, and a focus on user intent. Using terms like "synthetic media," "AI misinformation," "deepfake AI," and "deepfake detection" in public guidance and outreach can help audiences find accurate information and resources.

The Trump post altering footage of Hakeem Jeffries is a reminder that synthetic media can have immediate political consequences, especially when timed to high-stakes events such as a government funding deadline. Moving forward, policymakers, platforms, and political actors will need clearer rules, faster verification practices, and stronger public education to prevent manipulation from shaping public debate. For institutions, the practical takeaway is to assume manipulated media will appear, prepare verification workflows, and communicate transparently when it does. Will that be enough to preserve trust in public discourse? The coming months will be a crucial test.

Suggested resources and search phrases: deepfake AI, synthetic media, AI misinformation, platform moderation, deepfake detection, how to detect AI deepfakes in 2025, digital provenance, AI regulation 2025